What Are We Measuring? Rethinking Mock Exams

You don't run a marathon to prepare for a marathon

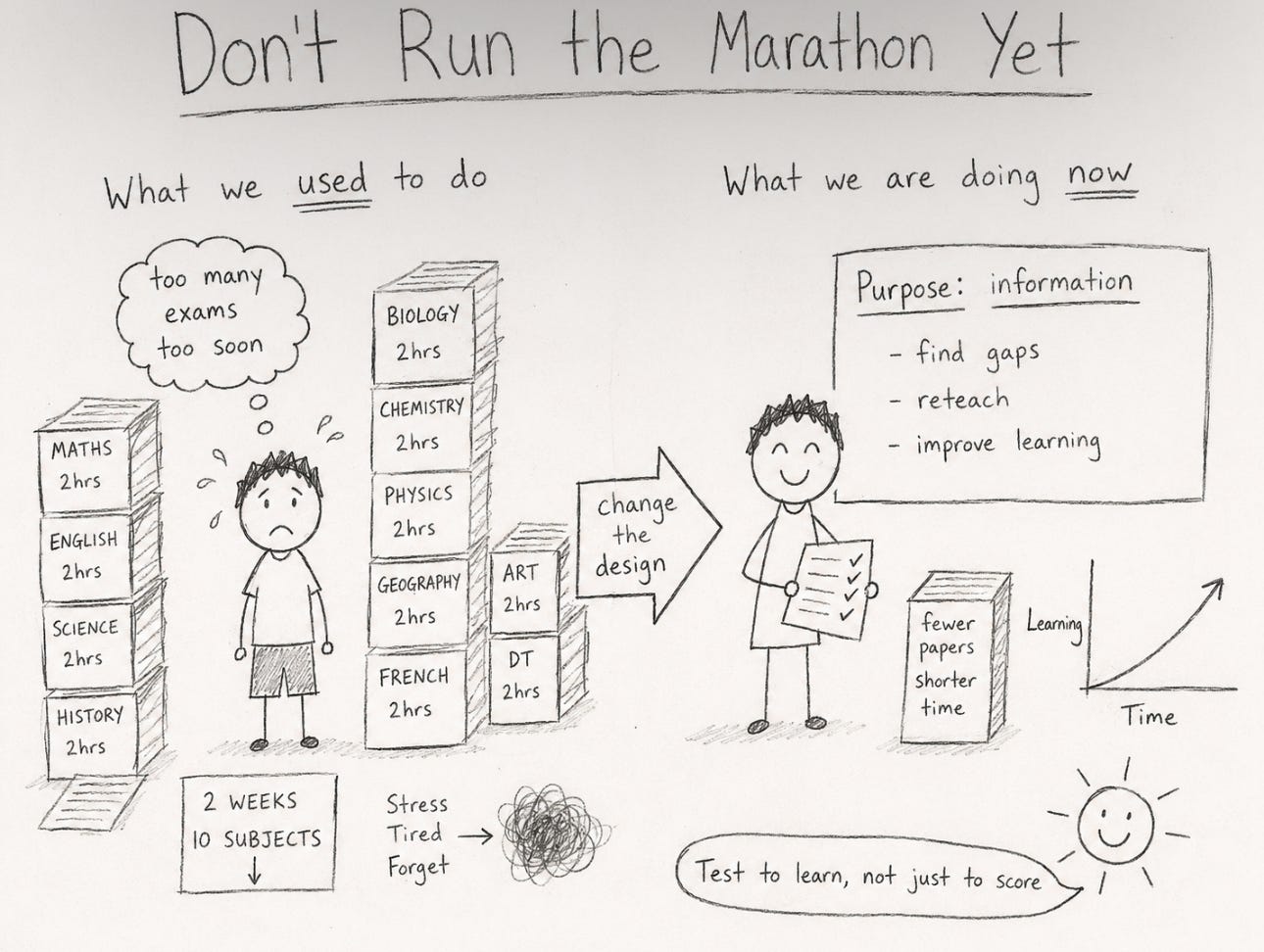

Next week, our Year 10 IGCSE students begin two weeks of mock examinations. Traditionally, this has meant a heavy window of papers across 8 to 10 subjects, many at full length, compressed into a sprint far tighter than the actual examination series, which runs across two months or more. The intent has always been positive: give students a realistic taste of exam conditions, generate a meaningful data point at the halfway stage, and feed that information back into teaching, and of course, report back to parents. Though it has never been just about getting a letter to drop into the mark book.

For a few years now, we have questioned the process as these end of year exams approach. But then the busyness of school life carries everyone forward and nothing gets done. This year was different. A colleague who had recently stepped up to lead on internal examinations took the time to properly review what we were doing and, crucially, acted on it before the window closed. Her review asked a simple but difficult to answer question. What is this window of testing actually for?

The Year 10 examination window is not primarily about generating grades. Its value is in producing information that improves learning. It should function as a diagnostic mid-course checkpoint: generating valid, reliable and actionable data that helps departments identify strengths and gaps, enables targeted feedback and reteaching, and supports students in recognising their own misconceptions and areas requiring further retrieval and revision. Once you’re clear on the purpose, the design of the assessment needs to follow from it. When we looked honestly at what we had been doing, the design and the purpose did not quite match up.

The evidence on testing is clear and well-established. The testing effect is one of the most replicated findings in cognitive science. Retrieval strengthens memory, and when students are required to pull knowledge from long-term memory, learning improves. Frequent, low-stakes retrieval helps information stick, strengthens recall pathways and makes forgetting less likely.

But running a compressed mock season halfway through a two-year course is a different proposition entirely. The research base is strongest for short, focused, regular retrieval, not high-stakes replication of the final exam experience. When we move from low-stakes retrieval to full formal papers under timed conditions, we are no longer primarily strengthening memory. We are assessing performance under pressure, stamina, stress tolerance, sleep management and emotional regulation. The construct shifts and under those conditions, what students display is not necessarily their learning, but more their capacity to cope.

Daisy Christodoulou draws on a memorable analogy in Making Good Progress: you don’t train for a marathon by running a marathon every training session. The full distance is the test of preparation, not the method of building it. The same logic applies in classrooms. You do not prepare students for high-stakes exams by placing them under high-stakes exam conditions simply because it appears to be good preparation. The conditions of the race need to be introduced gradually, once the knowledge base and the conditioning that would allow genuine performance are secure enough to be meaningfully tested. What we had in Year 10 was, in effect, a training programme that opened with the full race half-way through training.

If a Year 10 student sits multiple two-hour papers across many subjects within a two-week window, the data does not purely reflect curriculum mastery. It reflects coping under artificial compression. For many students, this window is actually harsher than the real examination series, which runs concurrently for our Year 11, 12 and 13s, and where papers tend to be better spaced, study leave is extended and sequencing is far clearer. Some Year 10s have genuine IGCSE exams sitting inside their mock window, adding a further layer of pressure that has nothing to do with what any teacher is trying to measure.

Which returns us to the validity question: are we measuring what we intend to measure?

Domain coverage extends that concern. A full past paper generally looks to sample a huge chunk of the specification. But midway through Year 10, not all content has been taught or secured. When a student underperforms on an unseen or lightly covered unit, the signal gets blurred. Is that a knowledge gap? A sequencing issue? Poor instruction? Premature exposure to material that simply hasn’t been retrieved enough yet? The resulting grade can look authoritative while masking a much more complicated diagnostic picture.

This is exactly where the design principle from our review focused. If the purpose is a valid, reliable and actionable mid-course checkpoint, then the volume and length of assessments should match that purpose, not exceed it. The change was straightforward: fewer papers, capped timing. There was some resistance, but definitely progress. Adapted papers can still preserve exam-style structure, command words and mark weighting. They can still include extended analytical responses and evaluative questions. But by reducing the overall load, they produce cleaner data, protect student wellbeing and give departments something they can genuinely act on, rather than a dataset distorted by fatigue and artificial compression.

Wellbeing is not a second order consideration here. Performance science shows that acute stress can sharpen attention in short bursts. Sustained stress across multiple high-demand tasks doesn’t: it degrades working memory, disrupts sleep and erodes emotional control. When students are revising simultaneously for ten or more formal papers, cognitive load does not just accumulate, it compounds. And they will cram the night before each paper, producing a performance shaped by short-term retention rather than secure knowledge. Ebbinghaus’ forgetting curve tells us exactly how quickly that fades. We may have believed we were building resilience. We were more likely generating noise in our data and unnecessary stress for our students.

There is also a sequencing argument that underpins all of this. Year 10 is about knowledge security and skill development. Year 11 is more about exam replication and stamina. Conflating the two too early is a misalignment. Students need to learn how to think within a discipline before they are asked to endure the full performance environment of the final series. The conditions of the exam are something to be introduced gradually, not dropped on students at full weight before the knowledge base that would allow them to perform is anywhere near secure.

The strategic question, then, is worth thinking about. What are we actually trying to answer at this point in the course? Forecasting predicted grades? Identifying intervention needs? Checking knowledge security? Building exam literacy? Testing to strengthen memory? These are different purposes, and they require different assessment designs. A full past paper at full length is not the only marker of seriousness, and treating it as though it were conflates performance with learning in exactly the way the research warns us against.

What our review gave us was not just a reduced exam load, though it did produce that. It gave us a clearer answer to the strategic question, and an assessment model designed intentionally around that answer. Getting there required someone to ask an uncomfortable question early and see it through, and we are genuinely grateful she did. The Year 10 window now has a defined purpose: a diagnostic mid-course checkpoint designed to produce information departments can genuinely use, feed a strong reteaching and retrieval cycle, and give students a meaningful picture of where they actually are, not a bruising preview of what it feels like to attempt the race before the training is done. We will find out next week whether it delivers on that. But at least now we are asking the right questions before the papers go out, not after.

Assessment should strengthen memory, clarify gaps and guide teaching. A tighter, more deliberate model produces better data, stronger learning and calmer students.

Midway through the course, you really don’t want to be running a rehearsal for survival. If you want maximum effectiveness from an end of year assessment, you have to use it as an opportunity for precision.